Memleak

Dynamically validating static memory leak warnings

This site presents our memory leak validation tool and experiment data.

SEG (Software Engineering Group) , Nanjing University

Introduction

Memory leaks have significant impact on software availability, performance and security. Static analysis has been widely used to find memory leaks in C/C++ programs. Although a static analysis is able to find all potential leaks in a program, it often reports a great number of false warnings. Manually validating these warnings is a daunting task, which significantly limits the practicality of the analysis. So we develop a novel dynamic technique that automatically validates and categorizes such warnings to unleash the power of static memory leak detectors. Our technique analyzes each warning that contains information regarding the leaking allocation site and the leaking path, generates test cases to cover the leaking path, and tracks objects created by the leaking allocation site. Eventually, warnings are classified into four categories: MUST-LEAK, LIKELY-NOT-LEAK, BLOAT, and MAY-LEAK.

Warning classification

Warnings are classified into four categories: MUST-LEAK, LIKELY-NOT-LEAK, BLOAT, and MAY-LEAK.

Warnings in MUST-LEAK are guaranteed by our analysis to be true leaks. Warnings in LIKELY-NOT-LEAK are highly likely to be false warnings. Although we cannot provide any formal guarantee that they are not leaks, we have high confidence that this is the case. Warnings in BLOAT are also not likely to be leaks but they should be fixed to improve performance. Using our approach, the developer's manual verification effort needs to be focused only on warnings in the category MAY-LEAK, which is often much smaller than the original set.

Analysis algorithm

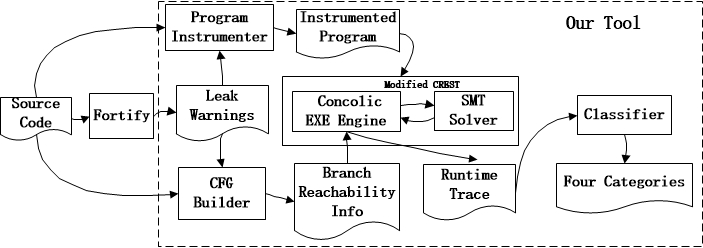

Figure above shows an overview of our tool.

Given a static leak warning w = (a, p, e), two pre-processing phases are performed prior to the test case generation. In the first phase, the target program is instrumented. The inserted code serves two major purposes: (1) it declares symbolic variables and marks the path fragment p in the source code for the subsequent path-directed concolic testing; (2) it tracks the usage of each run-time object created by the reported allocation site a and updates its tracking data. The second phase consists of a path reachability analysis, which is performed on the CFG of the program to determine, for each control flow branch, whether an execution following the branch could potentially reach each branch on p. This analysis is straightforward: it combines an intraprocedural control-dependence analysis with an interprocedural call graph traversal. The concolic testing engine is then modified to be aware of this reachability information so that the test case generation is guided to explore only the paths that may reach p, leading to a reduced search space in the symbolic execution. The

tracking data for each tracked object is inspected at the end of the execution to classify the warning.

Experiment

Our instrumentation was performed using the CIL instrumentation framework. The CREST concolic testing engine was modified to perform the path-guided test case generation. The static memory leak detector used in our experiments was HP Fortify version 3.2.

Experimental Subjects

We perform our first and third experiment using a set of programs from the Siemens and the coreutils benchmark suites. These programs are relatively small and it is thus easy for us to understand their implementation logic to manually verify our classification results and overhead. To increase the number of static analysis warnings for each program, we manually injected both true and false leaks. The injected benchmark can download here, including inject information table.

The programs are as follows:

| Program | Lines | Description |

| replace | 563 | Replace pattern |

| print_tokens | 726 | Lexical analysis |

| print_tokens2 | 569 | Lexical analysis |

| tcas | 173 | Collision avoidance |

| wc | 802 | Print newline, word, and byte counts for each file |

| cat | 785 | Concatenate and write files |

| head | 1063 | Output the first part of files |

| tr | 1949 | Translate or delete characters |

| expand | 433 | Convert tabs to spaces |

| unexpand | 535 | Convert spaces to tabs |

The second experiment includes a case study on the use of our tool to validate leaks for a large-scale application (i.e.,texinfo-4.33).

Results

Experiment 1:

Raw data for experiment 1 can download here.| Program | #L | #W | #S | #Must | #LNL | #B | #May | #F | T0(s) | T1(s) | Sp0(Mb) | Sp1(Mb) |

| replace | 563 | 18 | 3444 | 5 | 3 | 4 | 6 | 0 | 3.82 | 3.95 | 16.4 | 20.4 |

| print_tokens | 726 | 22 | 17383 | 8 | 4 | 6 | 4 | 0 | 3.7 | 5.0 | 16.9 | 20.6 |

| print_tokens2 | 569 | 29 | 16943 | 8 | 7 | 9 | 5 | 0 | 4.2 | 4.7 | 22.1 | 22.2 |

| tcas | 173 | 8 | 54 | 1 | 4 | 1 | 2 | 0 | 0.06 | 0.07 | 15.6 | 19.8 |

| wc | 802 | 8 | 6000 | 2 | 2 | 2 | 2 | 0 | 10.5 | 15.5 | 17.1 | 22.8 |

| cat | 785 | 8 | 4002 | 2 | 1 | 2 | 3 | 0 | 16.9 | 26.2 | 15.7 | 19.9 |

| head | 1063 | 18 | 5007 | 4 | 6 | 2 | 6 | 0 | 12.2 | 17.4 | 16.3 | 20.5 |

| tr | 1949 | 32 | 37281 | 11 | 8 | 8 | 5 | 0 | 21.2 | 26.5 | 16.8 | 21.2 |

| expand | 433 | 6 | 3854 | 1 | 1 | 2 | 2 | 0 | 22.3 | 26.9 | 21.8 | 25.9 |

| unexpand | 535 | 6 | 3996 | 1 | 1 | 2 | 2 | 0 | 25.7 | 26.2 | 23.1 | 27.2 |

#L: Lines of code;

#W: Numbers of warnings reported by Fortify;

#S: Numbers of test cases generated by the path-guided concolic testing

#Must: MUST-LEAK; #LNL: LIKELY-NOT-LEAK; #B: BLOAT; #May: MAY-LEAK;

#F: False Classifications;

T0 T1:Running times;

Sp0 Sp1:Peak memory consumptions.

Experiment 2:

Fortify reports a total of 91 warnings for texinfo. Our analysis has successfully classified 70 of them. For the rest 21 of the warnings, no test case can be generated by CREST to exercise their reported path fragments and thus they are classified as MAY-LEAK. This is primarily because the path constraints for these warnings are too complicated to solve. Among the 70 warnings that are precisely classified, the numbers of warnings in MUST-LEAK, LIKELY-NOT-LEAK, and BLOAT are, respectively, 69, 1, and 0.

Experiment 3:

We use Valgrind to compare and evaluate our method performance and overhead. The benmark is same with experiment1.

| Program | #L | #LP | #LP0 | #LP1 | #S | #TC | T0(s) | T1(s) | Sp0(Mb) | Sp1(Mb) |

| replace | 563 | 7 | 5 | 7 | 3444 | 5542 | 30.77 | 4968.12 | 549.9/20.4 | 28.9 |

| print_tokens | 726 | 4 | 4 | 4 | 17383 | 4130 | 32.16 | 3737.74 | 545/20.6 | 29.3 |

| print_tokens2 | 569 | 2 | 2 | 2 | 16943 | 4115 | 30 | 3735.83 | 551.1/22.2 | 27.9 |

| tcas | 173 | 2 | 1 | 2 | 54 | 1608 | 24.53 | 1424.58 | 552.6/19.8 | 27.9 |

| wc | 802 | 1 | 1 | 1 | 6000 | 835 | 48.44 | 1807 | 565.7/22.8 | 80.9 |

| cat | 785 | 2 | 2 | 2 | 4002 | 123 | 57.99 | 262 | 567.6/19.9 | 79.8 |

| head | 1063 | 4 | 4 | 4 | 5007 | 909 | 50.97 | 2002 | 540.9/20.5 | 80.9 |

| tr | 1949 | 6 | 6 | 5 | 37281 | 1593 | 64.4 | 3430 | 565.5/21.2 | 80.7 |

| expand | 433 | 2 | 1 | 2 | 3854 | 2578 | 55.95 | 5965 | 544.4/25.9 | 83.5 |

| unexpand | 535 | 2 | 1 | 2 | 3996 | 1560 | 56.09 | 3435 | 544.8/27.2 | 83.1 |

#L: Lines of code;

#LP: Number of leak points ;

#LP0 #LP1: Number of leak points found by our method and Valgrind;

#S: Numbers of test cases generated by the path-guided concolic testing;

#TC:Number of test cases used by Valgrind;

T0 T1:Running times;

Sp0 Sp1:Memory consumptions;

PS: Overhead of our method includes the static analysis stage, memory consumption data like 549.9/20.4Mb means that static analysis uses 549.9Mb memory and concolic testing uses 20.4Mb memory.

Support or Contact

If you have any questions or suggestions, please contact us.